The Last Commodity

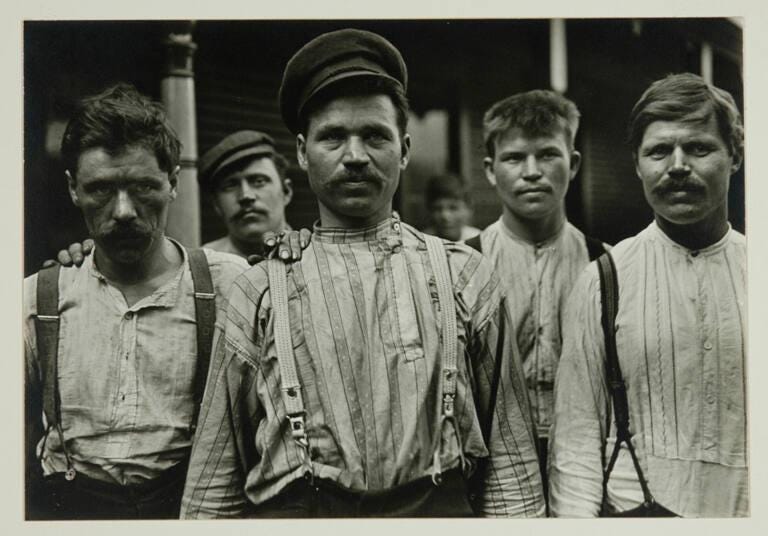

Somewhere, a photograph like this is being taken of you.

The headlines about artificial intelligence are not grim in any simple way — they do not announce a war or a recession. They announce something stranger: that a set of capabilities which we once believed to be irreducibly human — reasoning, writing, coding, designing, analyzing — are being systematically replicated by machines, and that those machines are getting better at an uncomfortable rate. The reaction most people have, encountering this through the filter of social media and breathless technology coverage, oscillates between two equally useless poles: existential dread and breezy dismissal. Neither is adequate. And the advice filling the space between them — learn AI, embrace the tools, stay curious — is so vague as to be nearly insulting.

What the moment demands is something harder. A serious attempt to think through what is actually happening, what has happened before when it looked something like this, and what it means for how an ordinary person is supposed to live and work. Not reassurance. Not panic. An argument.

Let us try to do that thinking here.

I. The Thing That Is Actually Happening

Before the economic theory, before the historical analogies, it is worth being precise about what is actually being built. The shift that has unsettled even people who work inside the technology industry is not simply that AI models have become more capable — it is that they have become agentic. Earlier AI systems were powerful but passive: you gave them a prompt and they returned a response. The systems being deployed now can be given a goal and left to pursue it, calling tools, writing and executing code, browsing the web, drafting and revising documents, and checking their own work along the way. They do not just answer questions about how to build software; they build the software themselves.

This is a meaningful difference, and it explains why the familiar reassurance — “AI is just a tool, like a calculator, like a search engine” — has begun to ring hollow. A calculator does not replace an accountant; it makes an accountant faster. But an agent that can receive a brief, research the relevant regulations, draft the filing, identify errors, and revise accordingly is not simply making an accountant faster. It is absorbing a substantial portion of what an accountant actually does all day.

The effect of this is already legible in stock markets. When Anthropic released its Claude Code Security feature, major cybersecurity stocks dropped by as much as roughly 10 percent over a few trading days, wiping out billions in market value on the fear that an AI assistant could automate a chunk of what those companies sell today. The remarkable thing about that fact is that Anthropic is primarily an AI research company; the security feature is, in corporate terms, much closer to an add‑on than a dedicated security business line.

Adobe has watched its stock price erode as AI image generation has democratized capabilities that once required years of training and a Creative Cloud subscription, falling more than 20 percent this year and over 40 percent from its 52‑week high as investors reassess how defensible its creative‑software moat really is in an era of cheap, good‑enough generative tools.

Infosys, IBM, and the cohort of large business‑process outsourcing firms that built their business models around providing human cognitive labor at scale — workflow design, software development, document processing — are now restructuring around generative‑AI and automation, cutting thousands of back‑office roles and rebranding themselves as AI‑enabled transformation partners, which is another way of saying that their old value proposition no longer exists in quite the form it once did.

What all of this reflects is a pattern that economic historians recognize immediately, even if the technology press tends to rediscover it as though it were novel: the commoditization of a previously scarce and expensive resource.

II. The Long History of Making Things Cheap

Every major technological revolution can be understood, at its core, as an act of commoditization. The industrial revolution commoditized physical labor. The steam engine did not make human muscles irrelevant — it made raw physical force cheap enough that the scarcity which had previously organized entire economies dissolved. The internet commoditized information and distribution. Before it, the barriers to publishing, to accessing a library’s worth of knowledge, to reaching a global customer base were substantial and meaningful. After it, they collapsed. Software commoditized process — it took the expensive, specialized knowledge required to run complex business operations and encoded it in systems that could be replicated for near-zero marginal cost.

The pattern in each case is the same: something that was scarce becomes abundant. Something that required specialized expertise becomes accessible. And in every instance, the immediate reaction from the people whose expertise is being commoditized is a version of the same argument — that what machines can do is merely mechanical, that the truly human, truly creative, truly valuable work will remain protected. Sometimes they are right about what is protected. They are almost always wrong about how far the commoditization reaches.

What AI is commoditizing is cognition itself — not all cognition, and not permanently, but specifically the kind of structured, repeatable, information-processing cognition that constitutes a very large fraction of white-collar work as it is currently organized. Legal research. Software development. Graphic design. Data analysis. Financial modeling. Content creation. Customer support. The ability to take a well-specified problem, process relevant information, and produce a well-structured output — this is being commoditized at a pace that would have seemed implausible even five years ago.

“What AI is commoditizing is cognition itself — the kind of structured, repeatable, information-processing cognition that constitutes a very large fraction of white-collar work.”

III. Why Marx Was Wrong Then, and Why His Ghost Is Wrong Now

In 1867, Karl Marx published the first volume of Das Kapital, a work of extraordinary intellectual ambition that attempted to demonstrate, through systematic economic analysis, that industrial capitalism contained the seeds of its own destruction. The argument, compressed considerably, went something like this: as capitalists competed with one another, they would replace human labor with machinery. This would increase productivity but reduce wages. Workers, earning less, would consume less. Demand would fall. Firms would respond by automating further to cut costs. A deflationary spiral would follow, immiserating the working class and eventually producing the conditions for revolutionary upheaval.

It was a remarkable piece of analysis. It identified real tendencies within capitalist economies. And its core prediction — that capitalism would destroy itself through the very logic of its own efficiency — was wrong in ways that took decades to fully understand.

Marx made an error that is easy to see in retrospect but difficult to resist in the moment: he assumed that the structure of demand was fixed. He saw workers displaced from agriculture moving into factories and struggled to envision where they might go next, because the industries that would eventually absorb them — services, entertainment, healthcare, technology — either did not exist yet or existed only in embryonic form. He could not see what would be created, only what was being destroyed, and so his model of the future was essentially the present with its internal tensions accelerated to their logical conclusion.

A recent research note from Citrini Research has attracted considerable attention for making a structurally similar argument about AI. The note’s logic runs as follows: as AI agents replace labor, the wage bill falls; as the wage bill falls, consumption falls; as consumption falls, firms face reduced demand and automate further to protect margins; this produces a deflationary spiral in which the productivity gains of AI accrue almost entirely to capital while labor is left with nothing to consume and nothing to sell. The argument is sophisticated and not without merit as a description of a possible failure mode. But it inherits Marx’s core error. It assumes that demand is fixed: that the things people want are already known and merely distributed between those who can and cannot afford them, rather than endlessly invented by the collision of new capabilities with human creativity and desire.

The internet, to take only the most recent example, was supposed to destroy the media industry. In a narrow sense, it did — classified advertising, which had sustained local newspapers for a century, evaporated almost overnight, and the newspaper industry has never recovered. But the internet also created the conditions for streaming music, for podcasting, for YouTube, for the creator economy, for the entire apparatus of digital entertainment and information that now constitutes an industry orders of magnitude larger than anything it replaced. The musicians who lost revenue from physical album sales did not, as a rule, respond by ceasing to make music. They adapted, experimented, and ultimately found that the collapse of one distribution model had opened space for many others.

IV. Schumpeter’s Gale

The thinker who actually understood this process — who grasped that capitalism’s defining feature was not its tendency toward equilibrium but its tendency toward perpetual, violent disruption — was Joseph Schumpeter, the Austrian-American economist who spent much of his career in productive argument with both the Marxists and the neoclassical economists of his era.

Schumpeter’s great contribution was the concept he called “creative destruction” — the observation that capitalism progresses not through the smooth optimization of existing production but through the constant introduction of new combinations of resources, capabilities, and ideas that render previous combinations obsolete. The automobile destroyed the carriage industry. The carriage industry employed coachmen, harness-makers, stable-hands, and a vast supporting apparatus of farriers and feed merchants. All of that was destroyed, and destroyed fairly rapidly, in the early decades of the twentieth century. What replaced it was not unemployment but the automobile industry, with its own enormous ecosystem of workers, suppliers, fuel producers, road builders, and mechanics.

Schumpeter was careful not to be naively optimistic about this process. Creative destruction is painful for those whose skills and industries are destroyed, and the transition between the old equilibrium and the new one can be prolonged and brutal. He did not pretend otherwise. What he insisted on was that the destruction and the creation were not separable — that you could not have one without the other, and that attempts to protect existing industries from disruption tended to retard the creation of new ones without actually saving the old ones from their eventual fate.

This is the framework within which AI ought to be understood. Not as a story in which human labor becomes worthless — because human desire, which is the ultimate source of economic demand, is not bounded in any way that has ever been observed. Not as a story in which nothing changes and everyone simply learns to use AI as a tool. But as a Schumpeterian gale of creative destruction, in which entire categories of work as currently organized will be transformed beyond recognition, the transition will be uneven and in some respects brutal, and what will emerge on the other side is something we can sketch in outline but cannot see in detail.

V. What Actually Happens When the Barriers Fall

History offers us a reasonably reliable prediction about one thing: when the cost of creation falls dramatically, the volume of creation expands dramatically, and some fraction of that expanded creation turns out to be genuinely valuable in ways that were not anticipated.

The desktop publishing revolution of the 1980s is instructive. Before it, producing a professionally typeset document required access to specialized equipment and trained operators. After it, anyone with a personal computer could produce something close enough for most purposes. The professional typesetters who were displaced were not wrong to feel threatened — their specific skill had been commoditized. But desktop publishing did not reduce the total amount of designed material in the world. It expanded it enormously, created entirely new categories of designed artifacts, and ultimately required more people working in visual communication than had worked in it before, even though the barrier to entry had fallen.

The same story unfolded with video. The arrival of affordable digital cameras, and then smartphones, and then the tools to edit and distribute video without a broadcast license, did not destroy the film and television industries. It created an entirely new layer of video production underneath them — YouTube, then TikTok, then the vast informal economy of creators — that in aggregate employs more people in video production than Hollywood ever did, while Hollywood itself continues to exist and, in its streaming form, to expand.

The underlying logic is not complicated. When something becomes easier to do, more people do it. When more people do it, the total output increases. The quality distribution of that output shifts — there is more excellent work, more mediocre work, and more outright slop, all coexisting in a volume that would not have been possible before. The slop does not crowd out the excellent work in any durable way, because excellent work has always found audiences willing to seek it out.

AI is following this pattern in real time. The people who are most anxious about it are, in many cases, those watching it commoditize the specific things they do. The people who are most energized by it are those discovering that it has removed barriers between them and something they always wanted to build or create but could not previously access. A person who is not a software engineer but has always had ideas for software can now build software. A person who is not a graphic designer but has a clear visual idea can now produce something close to it.

The counterargument to this optimism is real and deserves more than a sentence. The Luddites are remembered today as a byword for technophobia — people who smashed looms because they feared progress. This is almost entirely wrong. The Luddites were not ignorant of technology; they were skilled textile artisans who understood precisely what the new machinery would do to their livelihoods, organized against it deliberately, and were ultimately crushed by a combination of military force and economic inevitability. The framework of creative destruction explains, at the aggregate level, why the textile industry eventually employed more people than the hand-weavers it displaced. It does not explain what happened to the specific men who lost their specific craft. Many of them did not gracefully retrain. Many of them simply had a harder life. Some of them had a very hard life indeed. The same is true of the coachmen who did not become automotive engineers, the typesetters who did not become graphic designers, the travel agents who did not become UX researchers. Schumpeter’s gale is not selective about who it catches. And the people who are standing in its path right now are not abstractions.

VI. The Question You Are Actually Asking

All of this is, in a certain sense, prologue to the question that anyone reading about AI and feeling uncomfortable is actually asking, which is not about Marx or Schumpeter or the long arc of economic history. It is much simpler: What does this mean for me?

The advice most commonly dispensed — “learn AI,” “embrace the tools,” “stay curious” — is not wrong exactly, but it is asking you to think about this the wrong way. It frames AI as a subject to be studied, a credential to be acquired, a defensive posture to be adopted. But the people who are actually thriving in this transition are not doing so because they studied AI. They are doing so because they had something worth multiplying — and AI is, above all else, a multiplier.

This is the reframe that matters. AI does not add capability to people uniformly. It multiplies what is already there. A person with genuine judgment, original perspective, real relationships, or a specific hard-won understanding of how some corner of the world actually works — that person, with AI handling the surrounding infrastructure of their work, can now produce at a volume and quality that would have been impossible before. The bottleneck that constrained them was execution, and execution has gotten dramatically cheaper. But a person without those things, with AI, still has none of those things. The gap between someone with real insight and someone without it has not narrowed. In many domains it has widened, because the volume of output has increased and the scarce thing is no longer the ability to produce — it is having something worth producing.

The honest personal question, then, is not “how do I learn AI” but “what do I have that is worth multiplying?” And this is a harder question, because it requires an honest inventory of where your actual value lies — not your job title, not the tasks that fill your calendar, but the thing that would be genuinely difficult to replace because it comes from who you specifically are, what you have specifically experienced, and what you have specifically figured out. The people who can answer that question clearly are in a fundamentally different position from those who cannot, and no amount of prompt engineering changes that underlying reality.

For those who are early enough in their careers to still be deciding what to build, the framing shifts again. The question is not which skills are safe from AI, because that is a defensive question that will keep losing as the frontier advances. It is which capabilities, developed now, will give you something genuinely worth multiplying in a world where the tools to multiply it will be orders of magnitude more powerful than they are today. Some capabilities have historically retained that quality through disruption: the ability to understand what people actually want before they can articulate it, the capacity to hold institutional trust that takes years to build, the willingness to take responsibility for outcomes rather than just producing outputs, the judgment to know when the tool’s answer is confidently wrong. None of these are skills in the conventional sense. They are orientations. And they are not acquired by taking a course.

VII. What Gets Created in the Space

There is one more thing worth saying, which is harder to argue for rigorously but may be the most important.

Every previous wave of technological disruption has not only displaced existing work but also created conditions for kinds of creation that were not previously possible, and that expanded what it meant to participate in the economy as something other than a passive consumer. The printing press did not only displace scribes; it created the conditions for the pamphlet, the novel, the scientific journal, and the newspaper. These were entire forms of human expression and knowledge-sharing that did not previously exist, and they ultimately made the world far richer in ideas than it had been when knowledge was scarce and copying it was laborious.

AI is creating the conditions for something analogous, though it is not yet clear exactly what form it will take. The barrier between having an idea and being able to execute it is collapsing across domain after domain. This is already changing what it means to be an individual in the economy — not just an employee executing someone else’s vision but potentially a producer of things that are genuinely yours, built with AI as a collaborator rather than with a corporation as a gatekeeper.

Whether this possibility is fully realized depends not just on the technology but on a variable the optimistic version of this story tends to underweight: who captures the surplus.

This is where the Schumpeterian framework carries a hidden assumption that needs to be made explicit. Creative destruction generates new demand and new industries only if the productivity gains from the destruction circulate broadly enough to become someone else’s income, which becomes someone else’s consumption, which becomes the demand that makes the new industries viable. When the gains are too concentrated — when they accrue almost entirely to capital and almost not at all to labor — the cycle breaks. The new industries don’t grow fast enough because the money isn’t moving. This is not a hypothetical. The Gilded Age, the closest historical analogue to a period of rapid, concentrated technological and industrial gain, produced extraordinary aggregate wealth and extraordinary aggregate misery simultaneously, and it required decades of political conflict — antitrust legislation, labor law, progressive taxation, the New Deal — to redistribute the gains broadly enough to generate the consumer demand that sustained the next wave of growth. The question of whether AI’s gains circulate or concentrate is not a technological question. It is a political one. And unlike the question of what the technology will do, this one is still genuinely undecided.

This is, perhaps, the most useful reframe available. The story of AI is not finished and its ending is not inevitable. The question is not simply what AI will do to us, as though we are passive recipients of a fate being designed elsewhere. The question is what we will do with AI, and around AI, and in response to AI — and that question is still genuinely open, answered differently depending on decisions being made right now by people who may or may not understand the weight of what they are deciding.

History has never rewarded the people who, facing a wave of creative destruction, insisted on preserving the precise form of work they had always done. It has, with some consistency, rewarded those who understood what the wave was actually destroying — not their labor, not their creativity, not their judgment, but the specific organizational form in which those things had previously been packaged — and who found new packages. That process is rarely painless. It rarely moves at the pace that those caught in it would prefer. And it does not take care of the people standing in the wrong place at the wrong time. That is not nothing, and anyone who tells you otherwise has resolved the tension too cheaply.

But here is what the record also shows: every previous time a fundamental resource was commoditized, the world on the other side had more room for human endeavor than the world before it — more things being made, more ideas in circulation, more people participating in creation rather than just consumption. There is no law guaranteeing this continues. There is, however, considerable evidence that it is the most likely outcome, and that acting on the alternative — retreating, protecting, waiting for the wave to pass — has never once worked.

The machines are getting better at cognition. So, when we stop being afraid of them long enough to actually use them, are we.

This article draws on the economic frameworks of Joseph Schumpeter's Capitalism, Socialism and Democracy (1942) and Karl Marx's Das Kapital (1867), alongside recent market analysis of AI sector effects on incumbent industries. It is also indebted to the hundreds of reactions, arguments, and counter-arguments the author encountered on X — the doomers, the techno-optimists, and the genuinely uncertain alike — whose collective attempt to make sense of this moment is, in many ways, what the piece is a response to.